Humans of Simulated New York

For our month-long DBRS Labs residency, Fei and I built the beginnings of a toolset for agent-based economic simulation. My gut feeling is that the next phase of this AI renaissance will center on simulation. AlphaGo’s recent victory over the Go world champion - a landmark in AI history - resulted from a combination of deep learning and simulation techniques, and I expect we’ll see more of this kind of hybrid.

Simulation is important to planning, a common task in AI. Here “planning” refers to any task that requires producing a sequence of actions (a “plan”) that leads from a starting state to a goal state. An example planning problem might be: I need to get from New York (starting state) to London (goal state); what’s the best way of getting there? A good plan for this might be: book a flight to London, take a cab to the airport, get on the flight. But there are infinitely many other plans, depending on how detailed you want to get. I could walk and swim to London - it’s not a very good plan, but it’s a plan nonetheless!

To produce such plans, there needs to be a way of anticipating the outcome of certain actions. This is where simulation comes in. For example, in deciding how to get to the airport, I have to consider various scenarios - traffic could be bad at this particular hour, maybe there’s some chance the cab breaks down, and so on. Simulation is the consideration of these scenarios.

So simulation, especially as it relates to planning, is crucial to AI’s more interesting applications, such as policy and economic simulation, which seeks to understand the implications of policy decisions. Much like machine learning, planning and simulation have a long history and are already used in many different contexts, from shipping logistics to spacecraft. The residency was a great opportunity to begin exploring this space.

First plan

The general idea was to use a simulation technique called agent-based modeling, in which a system is represented as individual agents - e.g. people - that have predefined behaviors. These agents all interact with one another to produce emergent phenomena - that is, outcomes which cannot be attributed to any individual but rather arise from their aggregate interactions. The whole is greater than the sum of its parts.

Originally we aimed to create a literal simulation of New York City and use it to generate narratives of its agents: simulated citizens (“simulants”). We wanted to produce a simulation heavily drawn from real world data, collating various data sources (census data, market data, employment data, whatever we could scrape together) and unpacking their abstract numbers into simulated lives. Data ostensibly is a compact way of representing very nuanced phenomena - it lossy-compresses a person’s life. Using simulation to play out the rich dynamics embedded within the data seemed like a good way to (re-)vivify it. It wouldn’t reach the fidelity and honesty of lived experience, but might nevertheless be better than just numbers.

Over time though we became more interested in using simulation to postulate different world dynamics and see how those played out. What would the world look like if people behaved in this way or had these values? What would happen to this group of people if the government instituted this policy? What happens to labor when technology is productive enough to replace it? What if the world had less structural inequality than it does now?

Acknowledgements

This speculative direction had many inspirations:

Past and speculated initiatives around AI and governance were one - in particular, Allende’s Cybersyn, the ambitious, way-before-its-time attempt to manage an economy via networked computation under the control of the workers, and the imagined AI-managed civilization of Iain M. Banks’ Culture. These reached for utopian societies in which many, if not all, aspects were managed by some form of artificial intelligence, presumably involving simulation to understand the societal impacts of its decisions.

Another big inspiration was speculative social science fiction such as Ursula K. Le Guin’s “Hainish Cycle”, which explores how differences in fundamental aspects of humans lead to drastically different societies. From these we aspired to create something which similarly carves out a space to hypothesize alternative worlds and social conditions.

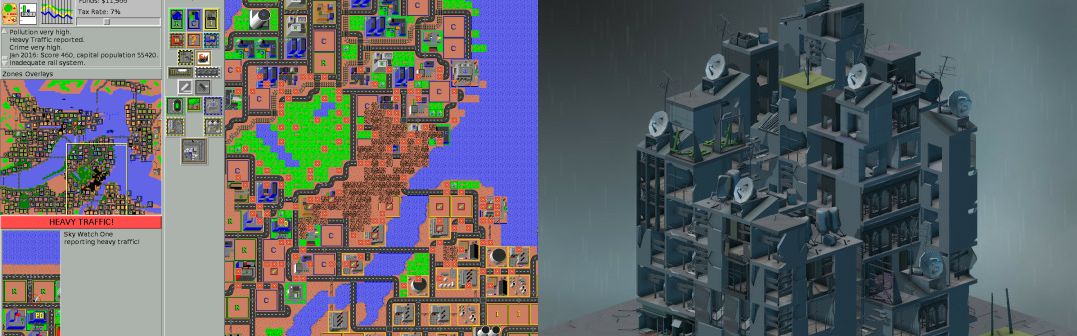

We also referred to what could be called “generative narrative” video games. In these games, no story is fixed or preordained; rather, only the dynamics of the game world are defined. Things take on a life of their own. Bay 12 Games’ Dwarf Fortress is one of the best examples of this - a meticulously detailed simulation of a colony of dwarves which almost always ends in tragedy. Dwarf Fortress has inspired other games such as RimWorld, which follows the same general structure but on a remote planet. Beyond the pragmatic applications of economic simulation, the narrative aspect produces characters and a society to be invested in and to empathize with.

In a similar vein, we looked at management simulation games, such as SimCity/Micropolis, Cities: Skylines (by Paradox Interactive, renowned for their extremely detailed sim games), Roller Coaster Tycoon, and more recently, Block’hood, from which we took direction. These games provide a lot of great design cues for making complex simulations easy to play with.

Game of Life

In both a literal and metaphorical way, these simulation games are essentially Conway’s Game of Life writ large. The Game of Life is the prototypical example of how a set of simple rules can lead to emergent phenomena of much greater complexity. Its world is divided into a grid, where each cell in the grid is an agent. Each cell has two possible states: on (alive) or off (dead). The rules which govern these agents may be something like:

- a cell dies if it has only one or no neighbors

- a cell dies if it is surrounded by four or more neighbors

- a dead cell becomes alive if it has three neighbors
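The three rules above are compact enough to write out directly. Here is a minimal sketch, assuming an unbounded grid and representing the world as a set of live `(x, y)` cells; the function name `step` and the two example patterns are our own choices for illustration. A live cell with two or three neighbors survives, which is what the first two rules imply.

```python
from collections import Counter


def step(alive):
    """Advance one generation; `alive` is a set of (x, y) live cells."""
    # Count live neighbors for every cell adjacent to at least one live cell.
    counts = Counter(
        (x + dx, y + dy)
        for (x, y) in alive
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # A cell is alive next turn if it has exactly three live neighbors,
    # or if it is currently alive and has exactly two.
    return {
        cell
        for cell, n in counts.items()
        if n == 3 or (n == 2 and cell in alive)
    }


# Same rules, different starting cells, different behavior:
blinker = {(0, 1), (1, 1), (2, 1)}        # flips between a row and a column
block = {(0, 0), (0, 1), (1, 0), (1, 1)}  # never changes
```

Calling `step` repeatedly on each pattern shows the point of this section in miniature: the blinker oscillates forever while the block sits still.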

How the system plays out can vary drastically depending on which cells start alive. Compare the following two - both use the same rules, but with different initial configurations (these gifs are produced from this demo of the Game of Life).

This is characteristic of agent-based models: different starting conditions and parameters can lead to fantastically different outcomes.

Navigating complexity

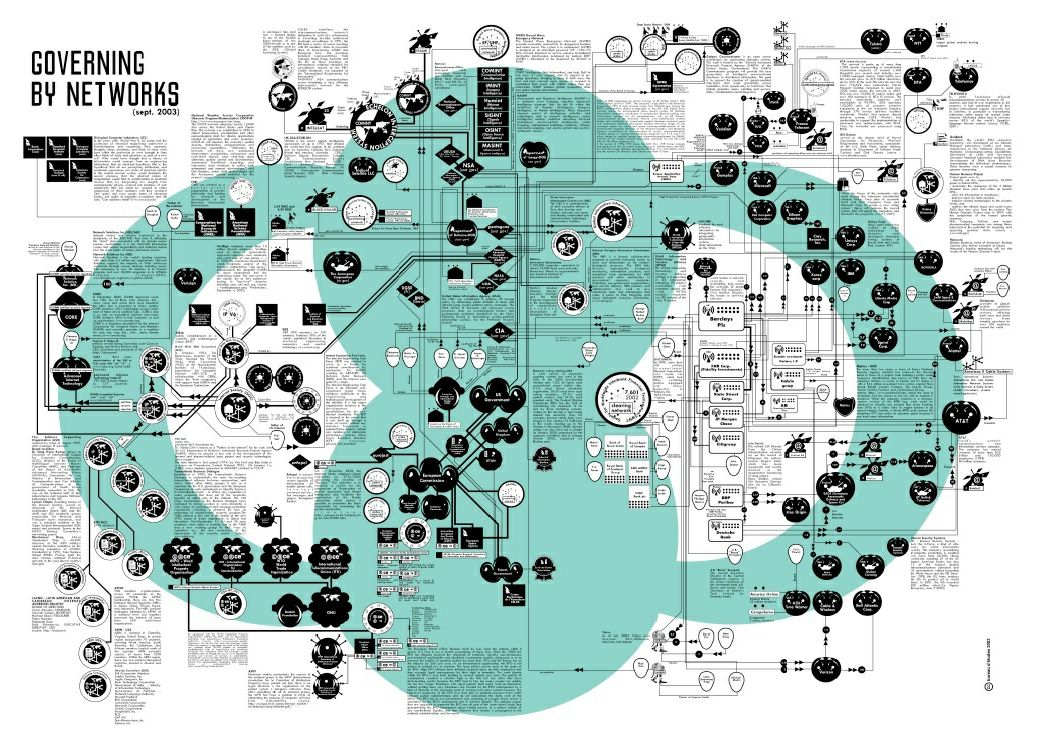

Interactive and well-designed simulation can also function as an educational tool, especially for something as complex as an economy. The dissociation between our daily experience and the abstract workings of the economy is massive. Trying to think about all its moving parts induces a kind of vertigo, and there is no single position from which the whole can be seen. While our project is not there yet, perhaps it may eventually aid in the cognitive mapping Fredric Jameson calls for in Postmodernism: “a situational representation on the part of the individual subject to that vaster and properly unrepresentable totality which is the ensemble of society’s structures as a whole”. Bureau d’Études’ An Atlas of Agendas attempts this mapping by painstakingly notating nauseatingly sprawling networks of power and influence, but it is still quite abstract, intimidating, and disconnected from our immediate experience.

Nick Srnicek calls for something similar in Accelerationism - Epistemic, Economic, Political (from Speculative Aesthetics):

So this is one thing that can help out in the current conjuncture: economic models which adopt the programme of epistemic accelerationism, which reduce the complexity of the world into aesthetic representations, which offer pragmatic purchase on manipulating the world, and which are all oriented toward the political accelerationist goals of building and expanding rational freedom. These can provide both navigational tools for the current world, and representational tools for a future world.

Yet another similar concept is “cyberlearning”, described in Optimists’ Creed: Brave New Cyberlearning, Evolving Utopias (Circa 2041) (Winslow Burleson & Armanda Lewis) as:

Today’s cyberlearning–the tight coupling of cyber-technology with learning experiences offering deeply integrated and personally attentive artificial intelligence–is critical to addressing these global, seemingly intractable, challenges. Cyberlearning provides us (1) access to information and (2) the capacity to experience this information’s implications in diverse and visceral ways. It helps us understand, communicate, and engage productively with multiple perspectives, promoting inclusivity, collaborative decision-making, domain and transdisciplinary expertise, self actualization, creativity, and innovation (Burleson 2005) that has transformative societal impact.

We found some encouragement for this approach in Modeling Complex Systems for Public Policies, which was published last year and covers the current state of economic and public policy modeling. In the preface, Scott E. Page writes:

…whether we focus our lens on the forests or students of Brazil or the world writ large, we cannot help but see the inherent complexity. We see diverse, purposeful connecting people constructing lives, interacting with institutions, and responding to rules, constraints, and incentives created by policies. These activities occur within complex systems and when the activities aggregate they produce feedbacks and create emergent patterns and functionalities. By definition, complex systems are difficult to describe, explain, and predict, so we cannot expect ideal policies. But we can hope to improve, to do better. (p. 14)

Early attempts

We had a lot of anxiety in deciding on the simulation’s level of detail. There were a few constraints which prevented us from going too crazy, e.g. computational feasibility (whether or not we could run the simulation in a reasonable amount of time) and sensitivity/precision issues (i.e. all the problems of modeling chaotic systems). These practical concerns were in tension with our desire to represent all the facets of life we were interested in, which was too ambitious. This tension is best described by Borges’ On Exactitude in Science, where a map is so detailed that it directly overlays the terrain it is meant to represent. A large part of modeling’s value is that it does not seek a one-to-one representation of its referent: such a representation is impractical, and the point of a model is to capture the essence of a system without too much noisy detail. For us, the details were important, but we also avoided too literal a model to leave some space for the simulation to surprise us.

First we tried fairly sophisticated simulants, which each had their own set of utility functions. These utility functions determined, for instance, how important money was to them or how much stress bothered them. Some agents valued material wealth over mental health and were willing to work longer hours, while others valued relaxation.

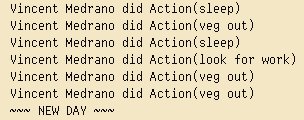

We went way too granular here. Agents would make a plan for their day, hour-by-hour, involving actions such as relaxing, looking for work, seeing friends, going to work, sleeping, visiting the doctor, and so on. Agents used A* search (a powerful search algorithm) to generate a plan they believed would maximize their utility, then executed on that plan. Some actions might be impossible from their current state - for instance, they might be sick and want to visit the doctor, but not have enough money - but agents could set these desired actions as long-term goals and work towards them.
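To give a flavor of this kind of planning, here is a minimal A* sketch. It is not the cess API or our actual agent code: the state `(money, healthy)`, the two actions, their costs, and the heuristic are all hypothetical, and for simplicity it minimizes hours spent rather than maximizing a utility function. It does show the sick-but-broke case: the agent can’t visit the doctor yet, so the plan works towards it.

```python
import heapq
from itertools import count

# Hypothetical actions: name -> (precondition, effect, cost in hours).
# A state is a (money, healthy) tuple.
ACTIONS = {
    "work": (lambda s: True, lambda s: (s[0] + 20, s[1]), 8),
    "visit_doctor": (
        lambda s: s[0] >= 50 and not s[1],
        lambda s: (s[0] - 50, True),
        1,
    ),
}


def plan(start, is_goal, heuristic):
    """A* search: return the cheapest list of action names reaching a goal."""
    tie = count()  # tie-breaker so the heap never has to compare states
    frontier = [(heuristic(start), next(tie), 0, start, [])]
    best_cost = {start: 0}
    while frontier:
        _, _, cost, state, path = heapq.heappop(frontier)
        if cost > best_cost.get(state, float("inf")):
            continue  # stale entry; a cheaper route to this state was found
        if is_goal(state):
            return path
        for name, (can_do, apply_action, step_cost) in ACTIONS.items():
            if not can_do(state):
                continue
            nxt = apply_action(state)
            new_cost = cost + step_cost
            if new_cost < best_cost.get(nxt, float("inf")):
                best_cost[nxt] = new_cost
                priority = new_cost + heuristic(nxt)
                heapq.heappush(
                    frontier, (priority, next(tie), new_cost, nxt, path + [name])
                )
    return None


def is_healthy(state):
    return state[1]


def hours_lower_bound(state):
    # Admissible heuristic: a sick agent needs at least the one-hour visit.
    return 0 if state[1] else 1


START = (10, False)  # 10 units of money, currently sick
print(plan(START, is_healthy, hours_lower_bound))
```

Even in this toy version the state space is unbounded (an agent can always work another shift), which hints at why hour-by-hour planning for a whole city got expensive.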

To facilitate developing these kinds of goal-oriented agents, we built the cess framework. Because there is a lot of computation happening here, cess includes support for running a simulation across a cluster. However, even with the distributed computation, modeling agents at this level of detail was too slow for our purposes (we wanted something snappy and interactive), so we abandoned this approach (development of cess will continue separately).

A simple economy

Given that our residency was for only a month, we ended up going with something simple: a conventional agent-based model of a very basic economy, with flexibility in defining the world’s parameters. A lot of what we wanted to include had to be left out. A good deal of the final simulation was based on previous work (of which there is plenty) in economic modeling. In particular:

- Agent-based model of economics: Market mechanisms, decision making, taxation. Leonid Hulianytskyi, Diana Omelianchyk. International Journal “Information Technologies & Knowledge” Volume 9, Number 1, 2015. 25-33.

- An agent-based model of a minimal economy. Christopher K. Chan. May 5, 2008.

- A simple agent-based spatial model of the economy: tools for policy. Bernardo Alves Furtado, Isaque Daniel Rocha Eberhardt. February 9, 2016.

In addition to our simulants (the people), we also had a government and firms. The firms included consumer good firms, capital equipment firms, raw material firms, and hospitals. The firms use Q-learning, as described in “An agent-based model of a minimal economy”, to make production and pricing decisions. Q-learning is a reinforcement learning technique in which an agent is “rewarded” for taking certain actions and “punished” for taking others, so that it eventually learns how to act under given conditions. Here firms use it to learn when producing more or less is a good idea and what profit margins consumers will tolerate.
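For readers unfamiliar with Q-learning, here is a minimal tabular sketch of the standard update rule. It is not the firm code from our simulation: the class name, the state and action labels (such as `low_stock` and `raise_price`), and the hyperparameter values are all hypothetical.

```python
import random
from collections import defaultdict


class QLearner:
    """Tabular Q-learning with epsilon-greedy action selection."""

    def __init__(self, actions, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
        self.actions = list(actions)
        self.alpha = alpha      # learning rate
        self.gamma = gamma      # discount on future reward
        self.epsilon = epsilon  # probability of trying a random action
        self.q = defaultdict(float)  # (state, action) -> estimated value
        self.rng = random.Random(seed)

    def choose(self, state):
        # Explore occasionally, otherwise take the best-known action.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.q[(state, a)])

    def update(self, state, action, reward, next_state):
        # Move the estimate towards reward + discounted best future value.
        best_next = max(self.q[(next_state, a)] for a in self.actions)
        target = reward + self.gamma * best_next
        self.q[(state, action)] += self.alpha * (target - self.q[(state, action)])


# Hypothetical usage: a firm that profited (reward 10) after raising prices
# while stock was low becomes more inclined to do so again.
firm = QLearner(["raise_price", "lower_price"], epsilon=0.0)
firm.update("low_stock", "raise_price", reward=10, next_state="low_stock")
print(firm.choose("low_stock"))
```

A negative reward (a loss) plays the role of the “punishment” and pushes the estimate for that action down instead.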

We still wanted to start from something resembling our world, at least to make the very tenuous claim that our simulation actually proves anything a tiny bit less tenuous. We gathered individual-level American Community Survey data from IPUMS USA and used that to generate “plausible” simulated New Yorkers. At first we tried trendy generative neural net methods like generative adversarial networks and variational autoencoders, but we weren’t able to generate very believable simulants that way. In the end we just learned a Bayes net over the data (I hope this project’s lack of neural nets doesn’t detract from its appeal 😊), which turned out pretty well.

A Bayes net allows us to generate new data that reflects real-world correlations, so we can do things like: given a Chinese, middle-aged New Yorker, what neighborhood are they likely to live in, and what is their estimated income? The result we get back won’t be “real” as in the data isn’t connected to a real person, but it will reflect the patterns in the original data. That is to say, it could be a real person.
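As a rough illustration of how that kind of query works, here is a toy three-node Bayes net sampled with simple rejection sampling. Everything in it is made up for the example: the structure (age, then neighborhood, then income), the category labels, and above all the probabilities, which are not estimated from the ACS data. Our actual generator is the code linked below.

```python
import random

# Made-up conditional probability tables, NOT learned from real data.
P_AGE = {"young": 0.4, "middle_aged": 0.6}
P_NEIGHBORHOOD = {  # P(neighborhood | age)
    "young": {"neighborhood_a": 0.7, "neighborhood_b": 0.3},
    "middle_aged": {"neighborhood_a": 0.2, "neighborhood_b": 0.8},
}
P_INCOME = {  # P(income bracket | neighborhood)
    "neighborhood_a": {"low": 0.6, "high": 0.4},
    "neighborhood_b": {"low": 0.3, "high": 0.7},
}


def draw(dist, rng):
    """Draw one value from a {value: probability} table."""
    values = list(dist)
    return rng.choices(values, weights=[dist[v] for v in values])[0]


def sample(rng, evidence=None):
    """Sample a simulant, retrying until it matches the given evidence."""
    evidence = evidence or {}
    while True:
        # Ancestral sampling: parents first, then children given parents.
        age = draw(P_AGE, rng)
        neighborhood = draw(P_NEIGHBORHOOD[age], rng)
        income = draw(P_INCOME[neighborhood], rng)
        person = {"age": age, "neighborhood": neighborhood, "income": income}
        if all(person[key] == value for key, value in evidence.items()):
            return person


rng = random.Random(0)
print(sample(rng, evidence={"age": "middle_aged"}))
```

Rejection sampling is the simplest approach, not necessarily the most efficient one; it gets slow when the evidence is rare.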

The code we use to generate simulants is available here. This is basically what it does:

```python
>>> from people import generate
>>> year = 2005
>>> generate(year)
{
    'age': 36,
    'education': <Education.grade_12: 6>,
    'employed': <Employed.non_labor: 3>,
    'wage_income': 3236,
    'wage_income_bracket': '(1000, 5000]',
    'industry': 'Independent artists, performing arts, spectator sports, and related industries',
    'industry_code': 8560,
    'neighborhood': 'Greenwich Village',
    'occupation': 'Designer',
    'occupation_code': 2630,
    'puma': 3810,
    'race': <Race.white: 1>,
    'rent': 1155.6864868468731,
    'sex': <Sex.female: 2>,
    'year': 2005
}
```

This is how we spawned our population of simulants. Because we were also interested in social networks, simulants could become friends with one another. We ripped out the parameters from the logistic regression model (the “confidant model” in the graphic below) described in Social Distance in the United States: Sex, Race, Religion, Age, and Education Homophily among Confidants, 1985 to 2004 (Jeffrey A. Smith, Miller McPherson, Lynn Smith-Lovin, University of Nebraska - Lincoln, 2014) and used that to build out the social graph. This social network determined how illnesses and job opportunities spread.
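The general shape of that step can be sketched as follows. This is a simplified illustration of using a logistic model to decide friendships, not our implementation: the features are reduced to three, and the intercept and coefficients are placeholders, not the values reported by Smith et al.

```python
import math
import random

# Placeholder parameters for illustration only; NOT the published values.
INTERCEPT = -1.0
COEFS = {
    "different_sex": -0.3,
    "different_race": -1.0,
    "age_gap_decades": -0.4,
}


def tie_probability(a, b):
    """Probability that simulants a and b are confidants (logistic model)."""
    features = {
        "different_sex": float(a["sex"] != b["sex"]),
        "different_race": float(a["race"] != b["race"]),
        "age_gap_decades": abs(a["age"] - b["age"]) / 10,
    }
    z = INTERCEPT + sum(COEFS[name] * value for name, value in features.items())
    return 1 / (1 + math.exp(-z))


def build_social_graph(people, rng):
    """Return a set of (i, j) friendship edges between simulant indices."""
    edges = set()
    for i in range(len(people)):
        for j in range(i + 1, len(people)):
            if rng.random() < tie_probability(people[i], people[j]):
                edges.add((i, j))
    return edges


people = [
    {"sex": "female", "race": "white", "age": 36},
    {"sex": "female", "race": "white", "age": 41},
    {"sex": "male", "race": "asian", "age": 67},
]
print(build_social_graph(people, random.Random(0)))
```

With negative coefficients on every difference, more similar simulants are more likely to befriend each other, which is the homophily effect the paper describes.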

The graphic below goes into the simulation design in more detail:

We designed the dynamics of the world so it could model some of the questions posed earlier. For instance, we modeled production and productive technology to explore the idea of automation. Say it takes 10 labor to produce a good, each worker produces 20 labor, and equipment adds an additional 10 labor. Now say your firm wants to produce 10 goods, which requires 100 labor. If you have no equipment, you would need five workers (5*20=100). However, if you have equipment for each worker, you only need four workers (4*(20+10)=120). (In our simulation, each piece of equipment requires one worker to operate it, so you can’t just buy 10 pieces of equipment and not hire anyone). To model a more advanced level of automation, we could instead say that each piece of equipment now produces 100 labor, and now to meet that product quota, we only need one worker (1*(20+100)=120). Then we just hit play and see what happens to the world.
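The arithmetic in that example fits in a few lines. This is just the worked example as code, with a hypothetical function name and parameter names, assuming (as in the example) that every worker operates one piece of equipment.

```python
def workers_needed(goods, labor_per_good=10, labor_per_worker=20,
                   labor_per_equipment=0):
    """Smallest number of workers, each with one piece of equipment,
    whose combined labor covers the production target."""
    required = goods * labor_per_good
    per_worker = labor_per_worker + labor_per_equipment
    # Integer ceiling division: round up to a whole worker.
    return -(-required // per_worker)


print(workers_needed(10))                           # no equipment
print(workers_needed(10, labor_per_equipment=10))   # regular equipment
print(workers_needed(10, labor_per_equipment=100))  # advanced automation
```

These three calls reproduce the five, four, and one workers from the paragraph above.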

Steering the city

As Ava Kofman points out in “Les Simerables”, these kinds of simulations embed their creators’ assumptions about how the world does or should work. Discussing the dynamics of SimCity, she notes:

To succeed even within the game’s fairly broad definition of success (building a habitable city), you must enact certain government policies. An increase in the number of police stations, for instance, always correlates to a decrease in criminal activity; the game’s code directly relates crime to land value, population density, and police stations. Adding police stations isn’t optional, it’s the law.

Or take the game’s position on taxes: “Keep taxes too high for too long, and the residents may leave your town in droves. Additionally, high-wealth Sims are more averse to high taxes than low- and medium-wealth Sims.”

The player’s exploration of utopian possibility is limited by these parameters. The imagination extolled by Wright is only called on to rearrange familiar elements: massive buildings, suburban quietude, killer traffic. You start each city with a blank slate of fresh green land, yet you must industrialize.

The landscape is only good for extracting resources, or for being packaged into a park to plop down so as to increase the value of the surrounding real estate. Certain questions are raised (How much can I tax wealthy residents without them moving out?) while others (Could I expropriate their wealth entirely?) are left unexamined.

These assumptions seem inevitable - something has to glue together the interesting parts - but they can be designed to be transparent and mutable. Unlike the assumptions with which we operate daily, these assumptions must be made explicit through code. Keeping all of this in mind, we wanted to make the simulation interactive in such a way that you can alter the fundamental parameters which govern the economy’s dynamics. But, while you can tweak numbers of the system, you can’t yet change the rules themselves. That’s something we’d like to add down the line.

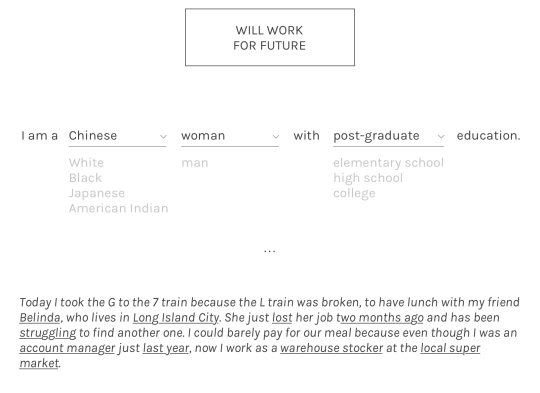

We played around with a few ideas for making the simulation interactive. At first we thought to have individuals create their own characters, specifying attributes such as altruism and frugality. Then we would run the player’s simulant through a year of their life and generate a short narrative about what happened. How the simulant behaves is dependent on the attributes the player input, as well as data-derived environmental factors. One of our goals was to model structural inequality and oppression, so depending on who you are, you may be, for instance, more or less likely to be hired.

World building was a very important component to us. By keeping all player-created individuals as part of the population for future players, the world is gradually shaped to reflect the values of all the people who have interacted with it. (Unfortunately we didn’t have time to implement this yet.)

We didn’t quite go that route in the end. Because we’ll demo the simulation to an audience, we wanted to design for that format - simultaneous participation. In the latest version, players propose and vote on new legislation between each simulated month and try to produce the best outcome (in terms of quality of life) for themselves and/or everyone.

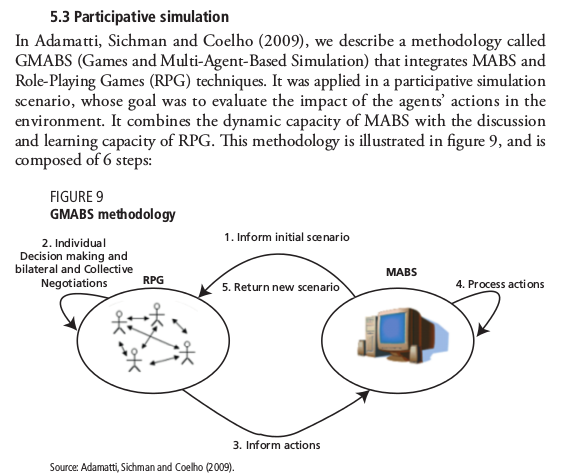

We found in Modeling Complex Systems for Public Policies that this approach is called “participative simulation”.

Visualizing disparity

The simulants in the city are meant to visualize structural inequality, as derived from American Community Survey and New York unemployment data (a full list of data sources is available in the project’s GitHub repo). We borrowed from Flatland’s hierarchy of shapes and made it so that polygon count correlates with economic status. So in our world, pyramids are unemployed, cubes are employed, and spheres are business owners. The simulant shapes are then colored according to Census race categories. It becomes pretty clear that certain colors have more spheres than others.

The city’s buildings also convey some information - each rectangular slice represents a different business, and each color corresponds to a different industry (raw material firms, consumer good firms, capital equipment firms, and hospitals). The shifting colors and height of the city become an indicator of economic health and priority - as sickness spreads, hospitals spring up accordingly.

For other indices, we fall back to basic line charts. An important one is the quality of life chart, which gives a general indication about how content the citizens are. Quality of life is computed using food satisfaction (if they can buy as much food - the consumer good in our economy - as they need) and health.

In many scenarios, the city collapses under inflation and gradually slouches into destitution. One by one its simulants blink out of existence. It’s really hard to strike the balance for a prosperous city. And the way the economy is organized - market-based, with the sole guiding principle of expanding firm profits - is not necessarily conducive to that kind of success.

Mere speculation

Players can choose from a few baked-in scenarios along the axes of food, technology, and disease:

- food
    - a bioengineered super-nutritional food is available
    - “regular” food is available
    - a blight leaves only poorly nutritional food
- technology
    - hyper-productive equipment is available
    - “regular” technology is available
    - a massive solar flare from the sun disables all electronic equipment
- disease
    - disease has been totally eliminated
    - “regular” disease
    - an extremely infectious and severe disease lurks

So people can see how things play out in a vaguely utopian, dystopian, or “neutral” (closer to our world) setting. Sometimes these scenarios play out as you’d expect - the infectious disease scenario wipes out the population in a month or two - but not always. Hyper-productive equipment, for instance, can lead to misery, unless other parameters (such as government) are collectively adjusted by players.

Where could this go?

These simulations are promising in domains like public policy - with movements like “smart cities”, it seems inevitable that this application will become ubiquitous - but their potential is soured by the reality of how policy and technological decisions are made in practice. Technological products tend to reproduce the power dynamics that produced them. As alluded to in Eden Medina’s piece, Cybersyn could have easily been implemented as a top-down control system instead of something which the workers actively participated in and took some degree of ownership over.

So it could go either way, really.

To me the value of these simulations is as a means to speculate about what the world could be like, to see how much better (or worse) things might be given a few changes in our behavior or our relationships or our environment. We seem to be reaching a high-water mark of stories about dystopia (present and future), and it has been harder for me to remember that “another world is possible”. Our project is not yet radical enough in the societies it can postulate, but we hope that these simulations can serve as a reminder that things could be different and provide a compelling vision of a better world to work towards.

A big thank you to Amelia Winger-Bearskin and the rest of the DBRS Labs folks for their support and to Jeff Tarakajian for answering our questions about the Census!